Edge computing and cloud computing differ mainly in where data is processed: close to the devices that generate it, or in centralized data centers. As businesses generate growing volumes of data, that choice has become a strategic decision that affects latency, cost, and reliability. Both models offer distinct advantages, and understanding their differences can help organizations build faster and more efficient systems.

With the rise of IoT devices, artificial intelligence, and real-time applications, companies are increasingly adopting hybrid infrastructures that combine the strengths of both edge and cloud computing.

What Is Edge Computing?

Edge computing is a distributed computing model where data is processed closer to its source rather than being sent to a centralized system. This means that devices like sensors, cameras, and local servers handle data processing at the “edge” of the network.

This approach reduces the time it takes for data to travel, making it ideal for real-time applications where speed is critical.

Key Features of Edge Computing:

- Localized data processing

- Reduced latency

- Real-time decision-making

- Lower bandwidth usage

For example, in autonomous vehicles, edge computing lets the vehicle process sensor data on board and make split-second driving decisions without waiting on distant servers.
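To make localized processing and lower bandwidth usage concrete, here is a minimal Python sketch of an edge device that evaluates readings locally and forwards only the ones that cross a threshold. The `Reading` type, the sensor name, and the threshold value are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor_id: str
    value: float


def filter_at_edge(readings, threshold):
    """Keep only readings above the threshold; everything else stays on the device."""
    return [r for r in readings if r.value > threshold]


def upload_fraction(readings, forwarded):
    """Fraction of readings that still has to leave the device."""
    return len(forwarded) / len(readings) if readings else 0.0


# Hypothetical temperature readings from one sensor.
readings = [Reading("temp-1", v) for v in (20.5, 21.0, 85.2, 20.8, 90.1)]
forwarded = filter_at_edge(readings, threshold=80.0)

print([r.value for r in forwarded])          # [85.2, 90.1]
print(upload_fraction(readings, forwarded))  # 0.4
```

In this toy case only two of five readings are transmitted. How much a real deployment saves depends on how often readings cross the threshold.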

What Is Cloud Computing?

Cloud computing delivers computing services such as storage, processing power, and databases over the internet. Instead of managing physical infrastructure, businesses can access scalable resources hosted in remote data centers.

Cloud computing concentrates resources in large, provider-run data centers and is widely used for its flexibility, scalability, and cost-efficiency.

Common Cloud Service Models:

- Infrastructure as a Service (IaaS)

- Platform as a Service (PaaS)

- Software as a Service (SaaS)

- Function as a Service (FaaS)

Cloud platforms are ideal for handling large-scale data processing, application hosting, and global collaboration.

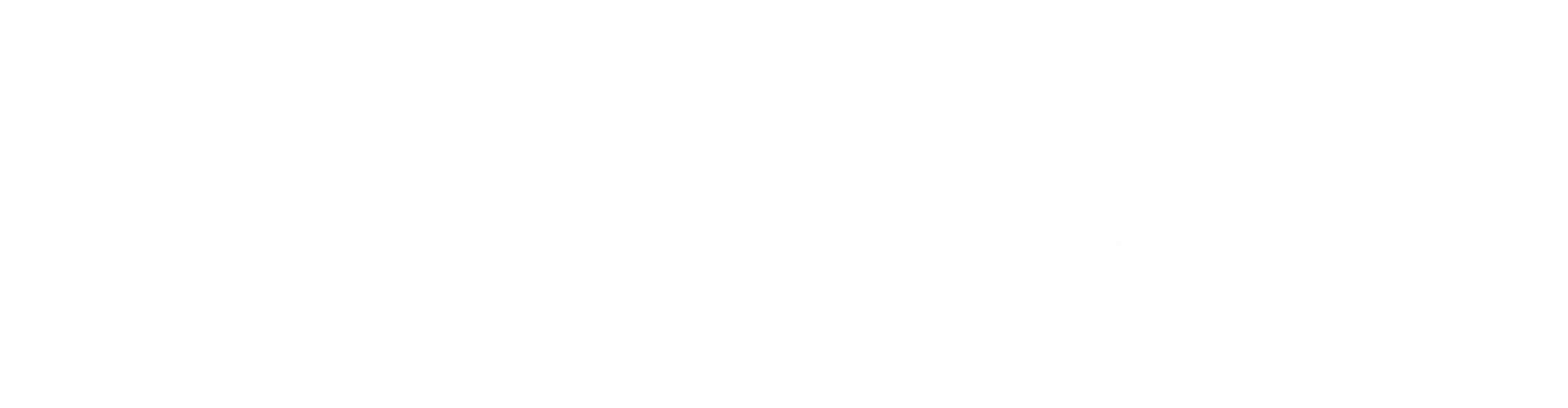

Edge Computing vs Cloud Computing: Key Differences

1. Architecture

- Edge Computing: Decentralized and distributed

- Cloud Computing: Centralized data centers

Edge computing processes data locally, while cloud computing relies on remote infrastructure.

2. Latency and Speed

- Edge: Low latency, because data is processed near its source

- Cloud: Higher latency, because data must travel to a remote data center and back

Applications like healthcare monitoring and robotics benefit significantly from edge computing’s speed.
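As a rough illustration of why distance matters, the sketch below estimates a lower bound on round-trip time from propagation delay alone. It assumes signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light in a vacuum) and ignores routing, queuing, and processing time, so real latencies are higher. The function name and the example distances are illustrative.

```python
# Assumption: approximate signal speed in optical fiber.
FIBER_SPEED_KM_PER_S = 200_000


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time in milliseconds, from propagation delay only."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


# Hypothetical distances: an edge node 1 km away vs. a data center 2,000 km away.
print(round(min_round_trip_ms(1), 3))     # 0.01
print(round(min_round_trip_ms(2000), 3))  # 20.0
```

Even before any processing happens, the distant data center adds about 20 ms per round trip in this example, which is significant for control loops that must react within a few milliseconds.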

3. Scalability

- Edge: Limited scalability; adding capacity requires deploying physical hardware

- Cloud: Highly scalable with on-demand resources

Cloud computing is better suited for businesses expecting rapid growth and fluctuating workloads.

4. Cost Structure

- Edge: Requires upfront hardware investment but reduces bandwidth costs

- Cloud: Pay-as-you-go model with potential data transfer costs

Each model has cost advantages depending on the use case.
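One way to compare the two cost structures is a simple break-even calculation: how many months it takes for lower monthly costs at the edge to offset the upfront hardware spend. The sketch below is a simplified model, and every figure in it is a made-up placeholder rather than a real price.

```python
def break_even_months(edge_upfront, edge_monthly, cloud_monthly):
    """Months until cumulative edge cost equals cumulative cloud cost.

    Returns None when the cloud is not more expensive per month,
    because the upfront investment is then never recovered.
    """
    monthly_saving = cloud_monthly - edge_monthly
    if monthly_saving <= 0:
        return None
    return edge_upfront / monthly_saving


# Hypothetical figures in an arbitrary currency.
months = break_even_months(edge_upfront=12_000, edge_monthly=200, cloud_monthly=700)
print(months)  # 24.0
```

With these placeholder numbers the edge hardware pays for itself after two years. A workload with low data transfer costs may never reach break-even, which is the case the `None` return value represents.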

5. Security and Privacy

- Edge: Data can stay on local devices, which limits its exposure in transit

- Cloud: Advanced security systems, but data is stored centrally

Organizations handling sensitive data may prefer edge computing for enhanced control.

6. Connectivity

- Edge: Works even with limited or no internet

- Cloud: Requires stable internet connectivity

Edge computing is ideal for remote or offline environments.
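Operating through an outage usually means the edge device keeps working locally and queues data until the link returns. The following is a minimal store-and-forward sketch under that assumption. The class name, the fixed capacity, and the `send` callback are hypothetical, and a production version would also need persistence and retry policies.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Holds readings while offline and uploads them in order once a link is available."""

    def __init__(self, capacity):
        # With maxlen set, the oldest item is dropped when the buffer is full.
        self._queue = deque(maxlen=capacity)

    def record(self, item):
        self._queue.append(item)

    def flush(self, send):
        """Send queued items in order, stopping at the first failure. Returns the count sent."""
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)


uploaded = []
buffer = StoreAndForwardBuffer(capacity=3)
for value in (1, 2, 3, 4):
    buffer.record(value)  # the oldest value (1) is dropped once capacity is exceeded

print(buffer.flush(lambda item: False))                         # 0, link is down
print(buffer.flush(lambda item: uploaded.append(item) or True)) # 3, link is back
print(uploaded)                                                 # [2, 3, 4]
```

Dropping the oldest readings when the buffer fills is a design choice made here for simplicity. Some systems would instead drop the newest data or spill to disk.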

Advantages of Edge Computing

Edge computing is gaining popularity due to its ability to handle time-sensitive operations.

Key Benefits:

- Faster response times

- Real-time analytics

- Reduced network congestion

- Improved reliability

- Enhanced data privacy

Industries like manufacturing, healthcare, and smart cities rely heavily on edge computing.

Advantages of Cloud Computing

Cloud computing remains the backbone of modern IT systems due to its flexibility and scalability.

Key Benefits:

- On-demand scalability

- Cost efficiency

- Global accessibility

- Centralized management

- Advanced analytics and AI tools

Businesses can quickly deploy applications and scale operations without heavy infrastructure investments.

Real-World Use Cases

Edge Computing Applications:

- Autonomous vehicles for real-time navigation

- Smart traffic systems for congestion control

- IoT devices like smart home systems

- Industrial automation for predictive maintenance

Cloud Computing Applications:

- Online learning platforms

- Healthcare data management

- Software development and testing

- Big data analytics and machine learning

Edge vs Cloud: Which One Should You Choose?

The choice between edge computing and cloud computing depends on your specific business needs.

Choose Edge Computing If:

- You need real-time processing

- Your systems operate in remote locations

- Low latency is critical

Choose Cloud Computing If:

- You need scalability and flexibility

- You handle large volumes of data

- Global accessibility is important

Hybrid Approach: The Future of Computing

Modern businesses are increasingly adopting a hybrid model that combines both edge and cloud computing.

Benefits of Hybrid Model:

- Faster performance through local processing

- Reduced operational costs

- Improved scalability

- Better data management

In this approach, data is processed at the edge for speed and then sent to the cloud for storage and advanced analysis.
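The split described above can be sketched in a few lines of Python: the edge makes immediate decisions on raw readings, then reduces them to a compact summary for the cloud. The function names, the alarm threshold, and the summary fields are assumptions for illustration, and the actual upload to a cloud service is left out.

```python
from statistics import mean


def summarize_window(values):
    """Compact summary of a window of readings, meant as the payload for the cloud."""
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
    }


def process_at_edge(values, alarm_threshold):
    """Act locally on raw readings, then prepare a summary for later upload.

    Assumes `values` is non-empty; min, max, and mean raise on an empty window.
    """
    alarms = [v for v in values if v > alarm_threshold]  # immediate local decisions
    summary = summarize_window(values)                   # what the cloud would store
    return alarms, summary


# Hypothetical window of sensor readings.
alarms, summary = process_at_edge([10, 12, 11, 50, 9], alarm_threshold=40)
print(alarms)   # [50]
print(summary)  # {'count': 5, 'min': 9, 'max': 50, 'mean': 18.4}
```

The alarm is raised without any network round trip, while the cloud receives four numbers instead of the full stream and can aggregate them across many sites.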

Why This Comparison Matters in 2026

As digital transformation accelerates, organizations face increasing demands for speed, efficiency, and security. Technologies like AI, IoT, and automation require both real-time processing and large-scale data handling.

This makes understanding edge computing vs cloud computing essential for staying competitive. Businesses that effectively integrate both technologies can improve performance, reduce costs, and enhance user experiences.

– The Empire Magazine

Crown For Global Insights